Constrained optimization#

This page demonstrates how to minimize constrained functions. It covers both inequality and equality constraints. It assumes that the user is already familiar with unconstrained optimization.

import numpy as np

import matplotlib.pyplot as plt

from smt_optim import minimize

# utility method to help with plotting 2d functions

from smt_optim.utils.plot_2d import get_plot2d_data

Constrained optimization of a 2D function#

Defining the test problem#

The following example illustrates how to optimize the Modified Branin test function when subject to a single inequality constraint. This can be formulated as:

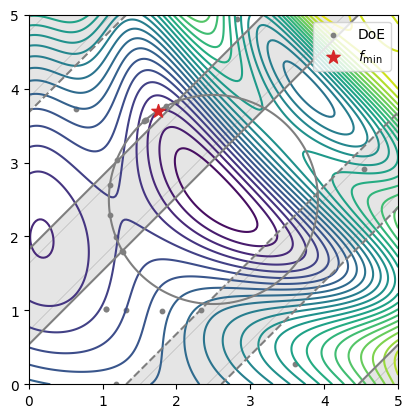

The cell below defines the objective function and its constraint. The figure plots the Modified Branin function. The shaded area represents the unfeasible region defined by the constraint.

# defines the objective function to minimize

def modified_branin(x):

X1 = 15 * x[0] - 5

X2 = 15 * x[1]

return (1*(X2-(5.1/(4*np.pi**2))*X1**2+(5/np.pi)*X1-6)**2+10*(1-1/(8*np.pi))*np.cos(X1)+10)+5*x[0]

# defines the inequality constraint

def simple_constraint(x):

return -x[0]*x[1] + 0.2

# defines the problem bounds

bounds = np.array([

[0, 1],

[0, 1],

])

XX, YY, Z = get_plot2d_data(modified_branin, bounds, 201)

_, _, C = get_plot2d_data(simple_constraint, bounds, 201)

fig, ax = plt.subplots()

ax.contour(XX, YY, Z, levels=25)

ax.contour(XX, YY, C, levels=[0], colors="C7")

ax.contourf(XX, YY, np.where(C<=0, np.nan, C), levels=0, colors="C7", alpha=0.2)

ax.set_aspect("equal")

plt.show()

Starting the optimization#

The easiest way to begin constrained Bayesian optimization is to use the minimize method. Three arguments must be provided:

objective: the function to minimize;design_space: the function boundary;constraints: the constraint definitions.

Since the objective parameter expects a list, place the objective function in a list. Providing the design space argument with an np.ndarray assumes the design space is entirely continuous.

In the cell below, we first define the constraint. The optimization starts by calling the minimize method. This method returns a State object, from which we can retrieve the final DoE, as well as the best feasible objective value.

constraint = [

{

"fun": [simple_constraint],

"upper": 0., # equivalent to: g(x) <= 0

}

]

state = minimize(

[modified_branin],

bounds,

constraints=constraint,

max_iter=10,

driver_kwargs={"seed": 0},

)

iter budget fmin rscv fidelity gp_time acq_time

1 6 5.47552e+01 0.000e+00 1 0.031 0.101

2 7 5.47552e+01 0.000e+00 1 0.031 0.086

3 8 3.21644e+01 0.000e+00 1 0.041 0.080

4 9 3.21644e+01 0.000e+00 1 0.041 0.080

5 10 3.21644e+01 0.000e+00 1 0.039 0.179

6 11 6.94315e+00 0.000e+00 1 0.042 0.164

7 12 5.62262e+00 0.000e+00 1 0.037 0.351

8 13 5.58270e+00 0.000e+00 1 0.036 0.480

9 14 5.57568e+00 0.000e+00 1 0.042 0.384

10 15 5.57568e+00 0.000e+00 1 0.048 0.554

We can retrieve the best function sample using the get_best_sample class method. This returns a Sample object containing data such as:

x: the design pointobj: the objective value atxcstr: the constraint values atxeval_time: the elapsed time to sample the objective and the constraints.

The Sample object also contains some metadata:

iter: the iteration at which the function was sampledbudget: the budget after sampling the functionrscv: the Root Square Constraint Violation

Furthermore, the get_best_sample class method accepts a ctol argument which specify the maximum RSCV tolerated. In the code cell below, we demonstrate how the value passed to ctol affects the best sample returned.

print("####### high constraint tolerance -> returns a lower objective value #######")

best_sample = state.get_best_sample(ctol=1.)

print(best_sample)

print("####### low constraint tolerance -> returns a higher objective value #######")

best_sample = state.get_best_sample(ctol=1e-4)

print(best_sample)

####### high constraint tolerance -> returns a lower objective value #######

======= sample data =======

x = [0.12739234 0.74589931]

obj = [1.9711074]

cstr = [0.10497814]

eval_time = [3.23000131e-06 3.89001798e-07]

------- meta data -------

iter = 0

budget = 4

fidelity = 0

rscv = 0.10497814310624284

===========================

####### low constraint tolerance -> returns a higher objective value #######

======= sample data =======

x = [0.96763933 0.2066893 ]

obj = [5.57567936]

cstr = [-6.91440957e-07]

eval_time = [6.62000093e-06 1.39599797e-06]

------- meta data -------

iter = 9

budget = 14

fidelity = 0

rscv = 0.0

===========================

Plotting the results#

The code snippet below exports all the design points evaluated during the optimization process and plots them against the test problem. The best sample is marked with a star.

x_doe = state.dataset.export_as_dict()["x"]

y_doe = state.dataset.export_as_dict()["obj"]

fig, ax = plt.subplots()

ax.contour(XX, YY, Z, levels=25)

ax.contour(XX, YY, C, levels=[0], colors="C7")

ax.contourf(XX, YY, np.where(C<=0, np.nan, C), levels=0, colors="C7", alpha=0.2)

# plots all the design points evaluated

ax.scatter(x_doe[:, 0], x_doe[:, 1], color="C7", s=10, label="DoE")

# plots the best design point

ax.scatter(best_sample.x[0], best_sample.x[1], color="C3", marker="*", label=r"$f_\min$", s=100, zorder=50)

ax.legend()

ax.set_aspect("equal")

plt.show()

Multiple constraints (equality and inequalities)#

Defining the test problem#

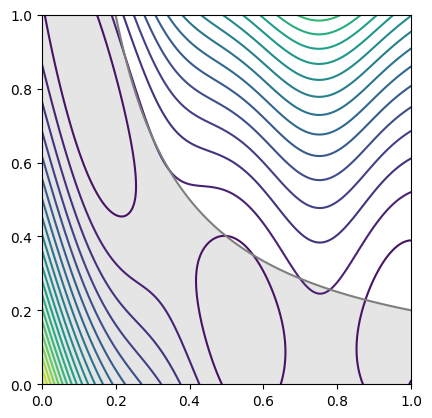

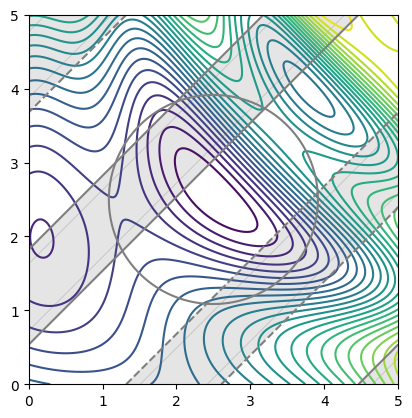

The following example illustrates how to set up an optimization problem with multiple constraint types. It shows how to optimize the Sasena test function with one equality constraint and two inequality constraints. The problem can be formulated as:

# defines the objective function to minimize

def sasena(x):

return 2+0.01*(x[1]-x[0]**2)**2+(1-x[0])**2+2*(2-x[1])**2+7*np.sin(0.5*x[0])*np.sin(0.7*x[0]*x[1])

# defines the equality constraint

def eq_constraint(x):

return (x[0]-2.5)**2+(x[1]-2.5)**2-2

# defines the inequality constraint

def ineq_constraint(x):

return -np.sin(x[0]-x[1]-np.pi/8)

# defines the problem bounds

bounds = np.array([

[0, 5],

[0, 5]

])

XX, YY, Z = get_plot2d_data(sasena, bounds, 201)

_, _, C_eq = get_plot2d_data(eq_constraint, bounds, 201)

_, _, C_ineq = get_plot2d_data(ineq_constraint, bounds, 201)

fig, ax = plt.subplots()

# draws the objective

ax.contour(XX, YY, Z, levels=25)

# draws equality constraint

ax.contour(XX, YY, C_eq, levels=[0], colors="C7")

# draws the inequality constraints

ax.contour(XX, YY, C_ineq, levels=[-0.8, 0.8], colors="C7")

ax.contourf(XX, YY, np.where(C_ineq<=0.8, np.nan, C_ineq), levels=0, colors="C7", alpha=0.2)

ax.contourf(XX, YY, np.where(C_ineq>=-0.8, np.nan, C_ineq), levels=0, colors="C7", alpha=0.2)

ax.set_aspect("equal")

plt.show()

Starting the optimization#

The first step is to define the constraints using a list of dictionaries. Each dictionary specifies the constraint callable and the feasible bounds.

For an equality constraint, the “equal” key sets the value at which the constraint must equal.

For an inequality constraint, the keywords “lower” and “upper” correspond to the lower and upper bounds that define the feasible domain.

Note that even if the problem is defined with only two constraint functions, there are actually three constraints in total (one for the equality and two for the inequalities). A single surrogate model will be generated for both inequality constraints, effectively using a single model to represent the lower and upper bounds.

constraints = [

{

"fun": [eq_constraint],

"equal": 0. # equivalent to: g(x) == 0.

},

{

"fun": [ineq_constraint],

"lower": -0.8, # equivalent to: g(x) >= -0.8

"upper": 0.8, # equivalent to: g(x) <= 0.8

}

]

state = minimize(

[sasena],

bounds,

constraints=constraints,

max_iter=20,

driver_kwargs={"seed": 0},

)

iter budget fmin rscv fidelity gp_time acq_time

1 6 6.28530e+00 2.329e+00 1 0.046 1.395

2 7 6.32374e+00 2.302e+00 1 0.048 1.108

3 8 7.51122e+00 1.617e+00 1 0.066 0.964

4 9 9.92290e+00 7.452e-01 1 0.062 0.981

5 10 1.23896e+01 2.580e-01 1 0.054 1.439

6 11 5.91810e+00 1.330e-01 1 0.064 0.380

7 12 5.91810e+00 1.330e-01 1 0.061 0.419

8 13 6.20722e+00 1.128e-01 1 0.059 2.624

9 14 6.29169e+00 1.974e-03 1 0.065 2.655

10 15 6.32559e+00 2.120e-04 1 0.082 3.384

iter budget fmin rscv fidelity gp_time acq_time

11 16 6.32559e+00 2.120e-04 1 0.074 3.391

12 17 6.32559e+00 2.120e-04 1 0.090 2.118

13 18 3.43870e+00 1.171e-04 1 0.102 2.743

14 19 4.75297e+00 5.051e-05 1 0.095 2.550

15 20 3.07097e+00 1.336e-05 1 0.086 2.518

16 21 3.07097e+00 1.336e-05 1 0.079 2.255

17 22 3.07097e+00 1.336e-05 1 0.082 1.906

18 23 3.05398e+00 1.387e-07 1 0.082 0.801

19 24 3.05397e+00 1.457e-08 1 0.086 0.333

20 25 3.05397e+00 1.457e-08 1 0.080 0.705

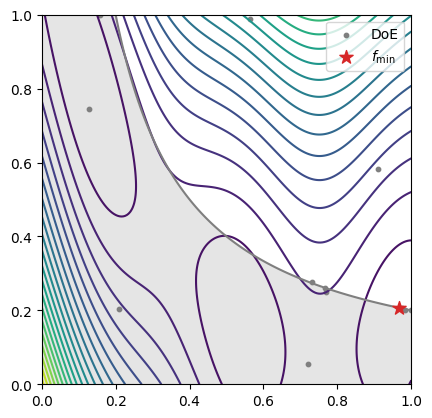

Plotting the results#

The code snippet below exports all the design points evaluated during the optimization process and plots them against the test problem. The best sample is marked with a star.

# retrieves the best sample with respect to a constraint tolerance

best_sample = state.get_best_sample(ctol=1e-6)

# retrieves the entire Design of Experiment (DoE)

x_doe = state.dataset.export_as_dict()["x"]

fig, ax = plt.subplots()

# draws the objective

ax.contour(XX, YY, Z, levels=25)

# draws equality constraint

ax.contour(XX, YY, C_eq, levels=[0], colors="C7")

# draws the inequality constraints

ax.contour(XX, YY, C_ineq, levels=[-0.8, 0.8], colors="C7")

ax.contourf(XX, YY, np.where(C_ineq<=0.8, np.nan, C_ineq), levels=0, colors="C7", alpha=0.2)

ax.contourf(XX, YY, np.where(C_ineq>=-0.8, np.nan, C_ineq), levels=0, colors="C7", alpha=0.2)

# plots all the design points evaluated

ax.scatter(x_doe[:, 0], x_doe[:, 1], color="C7", s=10, label="DoE")

# plots the best design point

ax.scatter(best_sample.x[0], best_sample.x[1], color="C3", marker="*", label=r"$f_\min$", s=100, zorder=50)

ax.legend()

ax.set_aspect("equal")

plt.show()